The AI ROI Productivity Paradox: Why Your 30% Velocity Boost Never Happened

At Patient Zero, we warned last year that chasing AI ROI without a mature AI-assisted delivery strategy would lead to a "Productivity Paradox." You cannot just turn on Copilot for your developers and expect a 300% increase in business value.

"We would look at customers that said, 'we’ve rolled out AI, we’ve switched on Copilot for everybody.' And then we would go and have a look at what they were using it for. Mostly auto-complete."

Fast forward to today. 2026 is the year of the reckoning. According to the latest data (Dataiku/Harris Poll 2026), 71% of CIOs believe they have until mid-2026 to prove AI value or face budget cuts. They are terrified because they spent millions on Copilot, but their teams are producing more bugs, not more features.

Here is the connection between the "Speed" you bought and the "Value" you lost.

The Reality Check: The Data on AI ROI in 2026

To understand why the ROI isn't there, you have to look at the math of a developer's day.

The "16 Minute" Math Problem

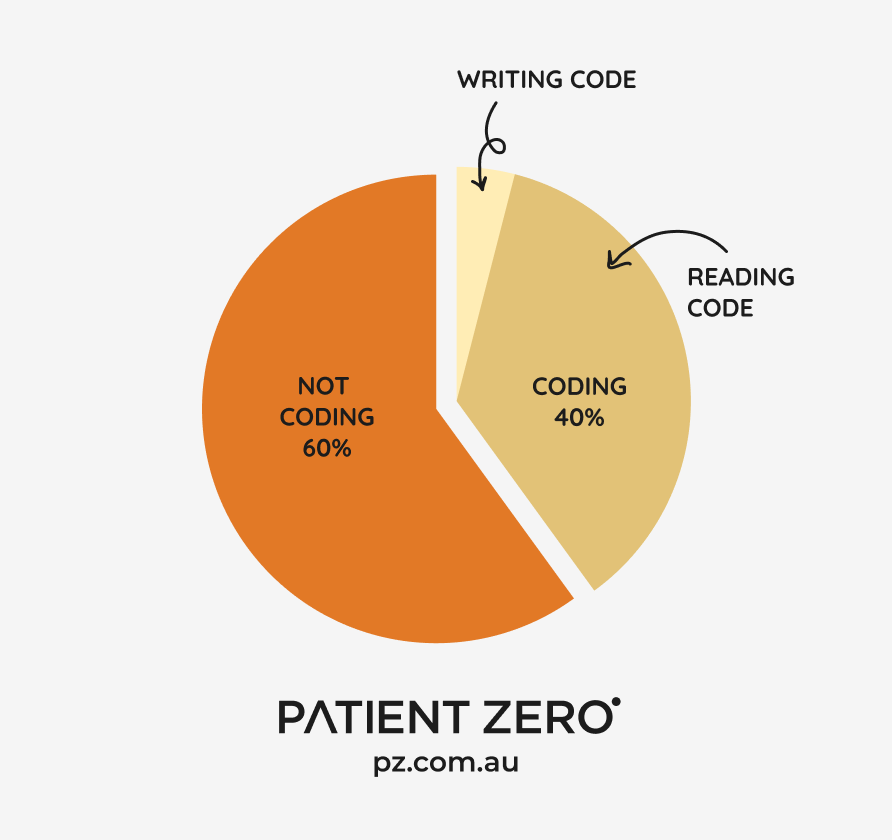

Developers spend only about 40% of their time coding and 60% on non-coding activities: code reviews, testing, collaboration, getting coffee. Of that 40% coding time, around 90% goes to reading code, with only 10% devoted to actually writing it.

So, in an 8-hour day, they spend roughly 16 minutes actually writing code.

If AI doubles their typing speed, you’ve saved... 8 minutes.
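The arithmetic can be checked in a few lines. This is a minimal sketch using the rounded percentages quoted above; the function names and the assumption that AI speeds up only the typing portion are illustrative, not measured data. With these rounded inputs the result lands at about 19 minutes, the same ballpark as the 16-minute headline (a coding share of about a third of the day gives 16 exactly).

```python
# Rounded figures quoted in the article; the savings model is an assumption.
WORKDAY_MINUTES = 8 * 60   # 480 minutes in an 8-hour day
CODING_SHARE = 0.40        # share of the day spent "coding"
WRITING_SHARE = 0.10       # share of coding time spent writing rather than reading


def minutes_writing(workday_minutes: float = WORKDAY_MINUTES) -> float:
    """Minutes per day spent actually typing new code."""
    return workday_minutes * CODING_SHARE * WRITING_SHARE


def minutes_saved(speedup: float) -> float:
    """Minutes saved per day if writing (and only writing) gets `speedup` times faster."""
    writing = minutes_writing()
    return writing - writing / speedup


if __name__ == "__main__":
    print(f"Writing code: {minutes_writing():.1f} min/day")           # 19.2
    print(f"Saved at 2x typing speed: {minutes_saved(2.0):.1f} min")  # 9.6
    print(f"Share of the day saved: {minutes_saved(2.0) / WORKDAY_MINUTES:.1%}")  # 2.0%
```

Whichever rounding you prefer, doubling typing speed frees up around 2% of the working day.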

What do you think they are doing with those extra minutes? They aren't building more features. As we will see, they are likely using them to fix the bugs the AI just created.

Root Cause 1: The "Negative Expertise" Trap

AI ROI fails because AI rises to the competence of the user. Just because your developer has 10+ years of experience doesn’t mean they’re competent at auditing AI.

AI is like a very smart PhD student, but it needs someone to question the code it produces: someone who can "smell" when something isn’t quite right. There are three concepts that relate to this:

- Meno's Paradox

- Unknown Unknowns

- Negative Expertise

All of these sum up to one thing: You need to know when you don’t know something.

The AI Drag

The AI Drag is a phenomenon where junior developers using AI take longer to complete tasks because they lack the expertise to validate the output. Scott Hanselman (VP Developer Community, Microsoft) spoke to this on the Distributed Podcast. He is seeing the inverse of the apprenticeship model. Juniors know just enough to be dangerous.

It feels counterintuitive. Juniors are using AI but getting stuck. The AI generates something wrong, and they don't "smell" it because they don't have the experience to spot it. Your afternoon is wasted because they created a bunch of junk.

The Comprehension Gap

At Patient Zero, we have a few steps in our recruitment process to ensure cultural and technical fit. We conduct code challenges and in-person sessions. In a recent developer intake, we had all software developers explain their code to us and the reason behind their decisions.

This doesn't mean the other 55% wrote the code from scratch. But whether they used AI or not, comprehension is the metric that matters.

How many organisations hire developers based on a code challenge, but never actually ask them to explain why they wrote it that way?

Root Cause 2: The Hidden Cost of Low Quality

Paying your good developers to spend more time fixing up other people’s code is a waste of ROI.

Harness’s “The State of Software Delivery 2025” report found that while AI speeds up initial development, it’s causing more issues down the pipeline:

- 67% of developers spend more time debugging AI-generated code.

- 68% of developers spend more time resolving security vulnerabilities.

- 59% of developers have problems with deployments using AI tools.

We are seeing human nature kick in. We are geared to conserve energy, and it’s easy to rely on the AI. "Great, I can just give it some prompts, copy-and-paste the code, and get back to playing my game." Even the most astute developers check once, twice, three times... and then think, "Well, I can trust it now." The fourth time is when it hallucinates and creates a mess.

Root Cause 3: Organisational Immaturity

We tend to go 2-3 times the speed of other teams at Patient Zero, even without the use of AI-assisted development. That’s down to the practices of our Embedded Team model.

Most of the time, it's not the team itself slowing down; it’s the organisation around it. If you’re just activating Copilot and expecting a performance increase, think again.

These are the most common things we see hindering teams:

- Meeting overload: Kills flow time.

- Big teams: You want a pizza-sized team (5 is the perfect number).

- Lack of Test Automation: You need quality gates to immediately indicate if your faster code generation broke anything.

- Confusing Architectural Patterns: Work that should be repeatable becomes a "tech adventure."

- Too many cooks: A team of seniors spending more time debating tooling than shipping.
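To make the "quality gates" item in the list above concrete, here is a minimal sketch of a gate decision. Everything in it is hypothetical: `BuildReport`, `gate_failures`, and the 80% coverage threshold are illustrative names and values, not a description of any particular CI product or of Patient Zero's own pipeline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuildReport:
    """Hypothetical summary a CI job might produce for one change."""
    tests_failed: int
    coverage_percent: float
    new_critical_vulnerabilities: int


def gate_failures(report: BuildReport, min_coverage: float = 80.0) -> list[str]:
    """Return the reasons a change should be blocked; an empty list means it passes."""
    reasons = []
    if report.tests_failed > 0:
        reasons.append(f"{report.tests_failed} automated test(s) failed")
    if report.coverage_percent < min_coverage:
        reasons.append(
            f"coverage {report.coverage_percent:.0f}% is below {min_coverage:.0f}%"
        )
    if report.new_critical_vulnerabilities > 0:
        reasons.append(
            f"{report.new_critical_vulnerabilities} new critical vulnerability finding(s)"
        )
    return reasons


if __name__ == "__main__":
    ai_generated_change = BuildReport(
        tests_failed=2, coverage_percent=70.0, new_critical_vulnerabilities=1
    )
    for reason in gate_failures(ai_generated_change):
        print("BLOCKED:", reason)
```

The point of the design is that the gate answers immediately and mechanically, so faster code generation does not simply move the checking work onto a senior reviewer.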

The "Burnout Baseline"

There is another invisible place your ROI went: Paying back cultural debt.

Before AI, many organisations were running on "fake velocity." According to Harness, 88% of developers report working more than 40 hours a week, with burnout at record highs.

If your team was only hitting their deadlines because they worked nights and weekends, your baseline was broken. When you introduced AI, they didn't use the time savings to do more work; they used it to stop working for free.

"If you are wondering, ‘where is my performance improvement here’, maybe some developers are just taking their time back that they didn’t have before."

You didn't pay for increased performance. You paid to stop your best engineers from quitting. That is valuable, but it doesn't show up on a feature spreadsheet.

Root Cause 4: Fear of Adoption

Finally, we have to address the elephant in the room: Fear.

People are genuinely afraid of what is coming. Some predict that within 18 months the majority of white-collar work will be automated. We’ve heard stories of organisations where leadership asks their teams to get in there and figure it all out. Some people do nothing (freeze). Others play around, realise "holy s**t", and tell their bosses AI isn’t ready yet (run).

There are organisations who are getting their teams to automate their own jobs, which is a horrible feeling.

The "Writing on the Wall"

Take the recent Commonwealth Bank (CBA) case. A team was tasked with automating their own workflows, only to be made redundant once the project was "complete." But the story didn't end there. The bank had to rehire them because the automated system couldn't handle the complexity or the volume without human oversight.

For many staff, this isn't just "enablement"; it is the writing on the wall. They see that if they succeed in automating their job, their reward is a pink slip.

Staff at Anthropic have voiced the same concern, given the level of automation they are seeing there with coding and co-assist tools.

The Evolution: Moving from Copilot to Agentic AI

The industry is rapidly shifting from AI Augmentation (Copilot) to Agentic AI. This isn't just a software upgrade; it is a fundamental shift in the operating model that most teams are not ready for.

Agentic AI is not Copilot. Copilot is an augmentation tool: a "force multiplier" for the human hands on the keyboard. Agentic AI is different: it is an autonomous loop. You are no longer the "Writer"; you are now the Orchestrator, the Master in the "Master and Apprenticeship" model.

You point your agents in the direction you want to go, monitor them, and ensure there is a quality output. But because Agentic AI operates in a "black box" (making its own decisions to fix errors), it introduces new risks that Copilot never did.

The Critical Difference in Workflow

Here is the critical difference in how the two solve problems:

| Feature | AI Augmentation (Copilot) | Agentic AI |
|---|---|---|
| Trigger | Prompt ("Help me fix this") | Goal ("Fix this bug") |
| Failure Mode | Returns error to human | Retries with new strategy |
| Example Steps | | |
| Sovereignty Risk | Low (Human sees the output) | High (Agent acts when you sleep) |
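The "Failure Mode" contrast can be sketched in code. This is an illustrative toy, not any vendor's agent framework: `copilot_style`, `agentic_style`, `fake_attempt`, and the strategy names are all hypothetical, and `fake_attempt` stands in for a real model call. The sketch adds two guard rails of its own, an attempt budget and an audit log, so the Orchestrator can see what the agent tried while nobody was watching.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical signature: (goal, strategy) -> a patch, or None on failure.
Attempt = Callable[[str, str], Optional[str]]
AuditLog = List[Tuple[str, bool]]


def copilot_style(prompt: str, attempt: Attempt) -> Optional[str]:
    """One suggestion per prompt; a failure goes straight back to the human."""
    return attempt(prompt, "suggest-once")


def agentic_style(
    goal: str, attempt: Attempt, strategies: List[str], max_attempts: int = 3
) -> Tuple[Optional[str], AuditLog]:
    """Retry with a new strategy until one works or the attempt budget runs out."""
    audit_log: AuditLog = []
    for strategy in strategies[:max_attempts]:
        patch = attempt(goal, strategy)
        audit_log.append((strategy, patch is not None))  # record every decision
        if patch is not None:
            return patch, audit_log
    return None, audit_log  # budget exhausted: escalate to a human


def fake_attempt(goal: str, strategy: str) -> Optional[str]:
    """Stand-in for a model call: only the 'add-null-check' strategy succeeds."""
    if strategy == "add-null-check":
        return f"patch for {goal!r} via {strategy}"
    return None


if __name__ == "__main__":
    print(copilot_style("Help me fix this", fake_attempt))
    patch, log = agentic_style(
        "Fix this bug", fake_attempt, ["retry-same", "add-null-check", "rewrite-module"]
    )
    print(patch)
    print(log)
```

In the augmentation case the human sees the failure immediately. In the agentic case the only record of the failed first strategy is the audit log, which is why reviewing that log becomes part of the Orchestrator's job.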

Conclusion: Do You Have the Capability?

If your developers are using AI in either case, it is their responsibility to own the code that is produced.

“It’s all about how we do it, how we make sure we put those guard rails in place. We are not introducing any security vulnerabilities. We are still responsible for the code we are pushing up to our repos.”

Bad code is still bad code. The shift from engineering to orchestrating is the new world we are adapting to.

Do you have the right gates, processes, and people to make sure you don’t get yourself into a further mess? Are you sure you have the right Orchestrators to succeed in this new world?

Turn Strategy into Sovereignty

Patient Zero bridges the gap between AI hype and engineering reality by mapping these sovereign trends directly to your engineering roadmap:

- The Strategy: Don't let AI generate technical debt. Build sovereign assets you own and understand with Custom Software Development.

- The Workforce: Need "Orchestrators" to audit the AI, not just coders to copy-paste it? Augment your capability with our Embedded Software Teams.

- The Infrastructure: Stop paying the "Cloud Tax" on bloated code. Refactor your estate for efficiency and security with Application Modernisation.

Demelza Green is the Co-CEO of Patient Zero and 10,000 Spoons. A Women in Digital UX Leader of the Year and ARN Innovation Award winner, she sits at the intersection of human experience and technical reality.

Fresh from the global conference circuit (CES, GITEX, Web Summit), she is focused on helping Australian enterprise software leaders navigate the shift from "Global Efficiency" to "Sovereign Resilience."

Her goal? To help Australian enterprises stop renting their future and start building it.